“We’re at the mercy of Google.” Undecided voters in the US who turn to Google may see dramatically different views of the world – even when they’re asking the exact same question.

Type in “Is Kamala Harris a good Democratic candidate”, and Google paints a rosy picture. Search results are constantly changing, but last week, the first link was a Pew Research Center poll showing that “Harris energises Democrats”. Next is an Associated Press article titled “Majority of Democrats think Kamala Harris would make a good president”, and the following links were similar. But if you’ve been hearing negative things about Harris, you might ask if she’s a “bad” Democratic candidate instead. Fundamentally, that’s an identical question, but Google’s results are far more pessimistic.

“It’s been easy to forget how bad Kamala Harris is,” said an article from Reason Magazine in the top spot. Then the US News & World Report offered positive spin about how Harris isn’t “the worst thing that could happen to America”, but the following results are all critical. A piece from Al Jazeera explained “Why I am not voting for Kamala Harris”, followed by an endless Reddit thread on why she’s no good.

You can see the same dichotomy with questions about Donald Trump, conspiracy theories, contentious political debates and even medical information. Some experts say Google is just parroting your own beliefs right back to you. It may be worsening your own biases and deepening societal divides along the way.

“We’re at the mercy of Google when it comes to what information we’re able to find,” says Varol Kayhan, an associate professor of information systems at the University of South Florida in the US.

The bias machine

“Google’s whole mission is to give people the information that they want, but sometimes the information that people think they want isn’t actually the most useful,” says Sarah Presch, digital marketing director at Dragon Metrics, a platform that helps companies tune their websites for better recognition from Google using methods known as “search engine optimisation” or SEO.

It’s a job that calls for meticulous combing through Google results, and a few years ago, Presch noticed a problem. “I started looking at how Google handles topics where there’s heated debate,” she says. “In a lot of cases, the results were shocking.”

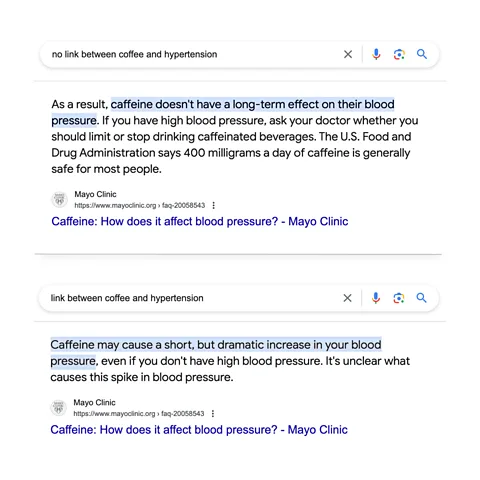

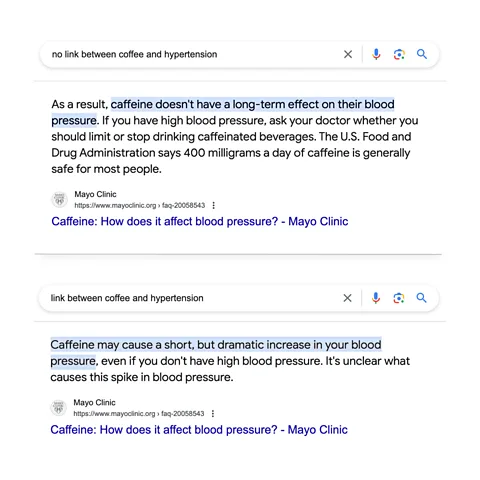

Some of the starkest examples looked at how Google treats certain health questions. Google often pulls information from the web and shows it at the top of results to provide a quick answer, which it calls a Featured Snippet. Presch searched for “link between coffee and hypertension”. The Featured Snippet quoted an article from the Mayo Clinic, highlighting the words “Caffeine may cause a short, but dramatic increase in your blood pressure.” But when she looked up “no link between coffee and hypertension”, the Featured Snippet cited a contradictory line from the very same Mayo Clinic article: “Caffeine doesn’t have a long-term effect on blood pressure and is not linked with a higher risk of high blood pressure”.

The same thing happened when Presch searched for “is ADHD caused by sugar” and “ADHD not caused by sugar”. Google pulled up Featured Snippets that support both sides of the question, again taken from the same article. (In reality, there’s little evidence that sugar affects ADHD symptoms, and it certainly doesn’t cause the disorder.)

She encountered the same issue with political questions. Ask “is the British tax system fair”, and Google cites a quote from Conservative MP Nigel Huddleston, arguing that indeed it is. Ask “is the British tax system unfair”, and Google’s Featured Snippet explains how UK taxes benefit the rich and promote inequality.

“What Google has done is they’ve pulled bits out of the text based on what people are searching for and fed them what they want to read,” Presch says. “It’s one big bias machine.”

For its part, Google says it provides users unbiased results that simply match people with the kind of information they’re looking for. “As a search engine, Google aims to surface high-quality results that are relevant to the query you entered,” a Google spokesperson says. “We provide open access to a range of viewpoints from across the web, and we give people helpful tools to evaluate the information and sources they find.”

When the filter bubble pops

By one estimate, Google handles some 6.3 million queries every minute, totalling more than nine billion searches a day. The vast majority of internet traffic begins with a Google Search, and people rarely click on anything beyond the first five links – let alone venture onto the second page. One study that tracked users’ eye movements found people often don’t even look at anything past the top results. The system that orders the links on Google Search has colossal power over our experience of the world.

According to Google, the company is handling this responsibility well. “Independent academic research has refuted the idea that Google Search is pushing people into filter bubbles,” the spokesperson says.

The question of so-called “filter bubbles” and “echo chambers” on the internet is a hot topic, though some research has questioned whether the effects of online echo chambers have been overstated.

But Kayhan – who has studied how search engines affect confirmation bias, the natural impulse to seek information that confirms your beliefs – says there’s no question that our beliefs and even our own political identities are swayed by the systems that control what we see online. “We’re dramatically influenced by how we receive information,” he says.

Google’s spokesperson points to a 2023 study which concluded that people’s exposure to partisan news is due more to what they choose to click on than to Google serving up partisan news in the first place. In one sense, that’s how confirmation bias works: people look for evidence that supports their views and ignore evidence that challenges them. But even in that study, the researchers said their findings do not imply that Google’s algorithms are unproblematic. “In some cases, our participants were exposed to highly partisan and unreliable news on Google Search,” the researchers said, “and past work suggests that even a limited number of such exposures can have substantial negative impacts”.

Regardless, you might choose to engage with information that keeps you trapped in your filter bubble, “but there’s only a certain bouquet of messages that are put in front of you to choose from in the first place”, says Silvia Knobloch-Westerwick, a professor of mediated communication at Technische Universität Berlin in Germany. “The algorithms play a substantial role in this problem.”

Google did not respond to the BBC’s question about whether there is a person or a team specifically tasked with addressing the problem of confirmation bias.

‘We do not understand documents – we fake it’

“In my opinion, this whole issue stems from the technical limitations of search engines, and the fact that people don’t understand what those limitations are,” says Mark Williams-Cook, founder of AlsoAsked, another search engine optimisation tool that analyses Google results.

A recent US anti-trust case against Google uncovered internal company documents where employees discuss some of the techniques the search engine uses to answer your questions. “We do not understand documents – we fake it,” an engineer wrote in a slideshow used during a 2016 presentation at the company. “A billion times a day, people ask us to find documents relevant to a query… Beyond some basic stuff, we hardly look at documents. We look at people. If a document gets a positive reaction, we figure it is good. If the reaction is negative, it is probably bad. Grossly simplified, this is the source of Google’s magic.”

“That is how we serve the next person, keep the induction rolling, and sustain the illusion that we understand.”

In other words, Google watches to see what people click on when they enter a given search term. When people seem satisfied by a certain type of information, it’s more likely that Google will promote that kind of search result for similar queries in the future.

A Google spokesperson says these documents are outdated, and the system used to decipher queries and web pages has become far more sophisticated.

“That presentation is from 2016, so you have to take it with a pinch of salt, but the underlying concept is still true. Google builds models to try and predict what people like, but the problem is this creates a kind of feedback loop,” Williams-Cook says. If confirmation bias pushes people to click on links that reinforce their beliefs, it can teach Google to show people links that lead to confirmation bias. “It’s like saying you’re going to let your kid pick out their diet based on what they like. They’ll just end up with junk food,” he says.
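The feedback loop Williams-Cook describes can be sketched as a toy simulation. This is an illustration under stated assumptions, not a description of Google’s ranking system: the function name `simulate_feedback`, the `click_bias` value of 0.7 and the rule that links are shown in proportion to their past clicks are all hypothetical.

```python
def simulate_feedback(rounds=20, click_bias=0.7):
    """Expected-value sketch of a click-driven feedback loop.

    click_bias is an assumed (not measured) preference: the chance that a
    user, shown a link, clicks it when it confirms their belief. Links that
    challenge the belief are clicked with chance 1 - click_bias.
    Returns the confirming links' share of all accumulated clicks per round.
    """
    confirming, challenging = 1.0, 1.0  # both kinds start on equal footing
    shares = []
    for _ in range(rounds):
        total = confirming + challenging
        # Assumed exposure rule: each kind of link is shown in proportion
        # to the clicks it has already collected.
        seen_confirming = confirming / total
        seen_challenging = challenging / total
        # Expected clicks this round = exposure x appeal.
        confirming += seen_confirming * click_bias
        challenging += seen_challenging * (1 - click_bias)
        shares.append(confirming / (confirming + challenging))
    return shares


if __name__ == "__main__":
    for round_number, share in enumerate(simulate_feedback(rounds=5), start=1):
        print(f"round {round_number}: confirming share = {share:.3f}")
```

In this sketch, setting `click_bias=0.5` (no preference either way) keeps the two kinds of link evenly balanced, while any preference above that compounds round after round.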

Williams-Cook also worries that people may not understand that when you ask something like “is Trump a good candidate”, Google may not necessarily interpret that as a question. Instead, it often just pulls up documents that relate to keywords like “Trump” and “good candidate”.

It gives people mistaken expectations about what they’re going to get when they’re searching, and that can push people to misinterpret what the search results mean, he says.
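To see how keyword matching alone can produce opposite results for what is essentially the same question, consider the rough sketch below. It ranks four of the lines quoted earlier in this piece by how many words each shares with the query. The scoring rule and the names `words` and `rank_by_keywords` are assumptions made for illustration; real search ranking is far more elaborate.

```python
import re


def words(text):
    """Lowercase a string and return its set of words, ignoring punctuation."""
    return set(re.findall(r"[a-z]+", text.lower()))


def rank_by_keywords(query, titles):
    """Order titles by how many query words they contain (naive overlap count)."""
    query_words = words(query)
    return sorted(titles, key=lambda t: len(query_words & words(t)), reverse=True)


# Lines quoted earlier in this article, used here purely as sample text.
QUOTED_LINES = [
    "Harris energises Democrats",
    "Majority of Democrats think Kamala Harris would make a good president",
    "It's been easy to forget how bad Kamala Harris is",
    "Why I am not voting for Kamala Harris",
]

if __name__ == "__main__":
    for adjective in ("good", "bad"):
        query = f"Is Kamala Harris a {adjective} Democratic candidate"
        print(query, "->", rank_by_keywords(query, QUOTED_LINES)[0])
```

Swapping a single word in the query changes which line comes out on top, even though nothing in the code treats the query as a question or weighs the evidence either way.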

If users were more clear on the search engine’s shortcomings, Williams-Cook believes they might think about the content they see more critically. “Google should do more to inform the public about how Search actually works. But I don’t think they will, because to do that you have to admit some imperfections about what’s not working,” he says.

Google is open about the fact that Search is never a solved problem, a company spokesperson says, and the company works tirelessly to address the deep technical challenges in the field as they crop up. Google also points to features it offers that help users evaluate information, such as the “About this result” tool and notices that let users know when results about a topic related to breaking news are changing quickly.

Philosophical problems

Google’s spokesperson says it’s easy to find results reflecting a range of viewpoints from sources all across the web, if that’s what you want to do. They argue that’s true even with some of the examples Presch pointed out. Scroll further down with questions like “is Kamala Harris a good Democratic candidate”, and you’ll find links that criticise her. The same goes for “is the British tax system fair” – you’ll find search results that say it isn’t. With the “link between coffee and hypertension” query, Google’s spokesperson says the issue is complicated but the search engine surfaces authoritative sources that delve into the nuance.

Of course, this relies on people exploring past the first few results – the further down the page of results they go, the less likely users are to engage with the links. In the cases of coffee and hypertension and the British tax system, Google also summarises the results and gives its own answer prominently with Featured Snippets – which may make it less likely that people will follow links further down in the search results.

For a long time, observers have described how Google is transitioning from a search engine to an “answer engine”, where the company simply gives you the information, rather than pointing you to outside sources. The clearest example is the introduction of AI Overviews, a feature where Google uses AI to answer search queries for you, rather than pulling up links in response. As the company put it, you can now “Let Google do the searching for you”.

“In the past Google was showing you something that someone else has written, but now it’s writing the answer itself,” Williams-Cook says. “It compounds all of these problems, because now Google has just one chance to get it right. It’s a difficult move.”

But even if Google had the technical ability to address all of these problems, it isn’t necessarily clear when or how they should intervene. You may want information that backs up a particular belief, and if so, Google is providing a valuable service by delivering it to you.

Many people are uncomfortable with the idea of one of the world’s richest and most powerful companies making decisions about what the truth is, Kayhan says. “Is this Google’s job to fix it? Can we trust Google to fix itself? And is it even fixable? These are difficult questions, and I don’t think anyone has the answer,” he says. “The one thing I can tell you for sure is that I don’t think they’re doing enough.”

* Thomas Germain is a senior technology journalist for the BBC. He’s covered AI, privacy and the furthest reaches of internet culture for the better part of a decade. You can find him on X and TikTok @thomasgermain.

Source: www.bbc.com

The post The ‘bias machine’: How Google tells you what you want to hear appeared first on Ghanaian Times.
